Stephen Roddy

Generative Music Systems

Research Line Overview

I have been interested in generative music systems since first encountering them at undergraduate level, when I devised a technique for hand-crafted generative music during my Final Year Project. Since that time a good deal of both my artistic practice and scholarly research output has involved generative music systems to some degree, both in my long-running Signal to Noise Loops project and, more recently, in my development of Generative Sonification Techniques for Synthetic Virological Data. Generative music systems are also key concerns in my Cybernetic AI/ML and Comms Network Sonification research.

Signal to Noise Loops Project

Signal to Noise Loops emerged from a broader project entitled ‘Auditory Display for Large-scale IoT Networks’ carried out at the CONNECT Centre Trinity College Dublin.

The project integrates open data from Internet of Things (IoT) sensor networks in Dublin, Ireland, in a series of experimental music performances and installations. Each piece treats the city, as mediated by the data it produces, as a collaborator in a musical or sonic work. The project is realized through a bespoke generative music system that continues to adapt and expand as the project evolves. The system is designed in line with principles from the field of Cybernetics to integrate with both the city and the human performer. Thus, the project links electronic music/sonic performance, IoT/Smart City Data, Generative Music techniques, and Cybernetics.

Each performance or installation draws data from networks of IoT devices placed around Dublin City. Sensor and network data are mapped to control the parameters of a given performance/installation. How this takes place is mediated by the generative music system. The state of the system is determined by the state of Dublin City, as represented through the IoT sensor data. The system’s state in turn determines the musical choices it makes while improvising alongside a human performer. Each performance with the system is unique, as it represents a complex array of data relations that describe the state of Dublin City at any given time. The project involved the iterative development of the system, with each performance acting as an evaluation after which the system would be expanded and further refined.
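As a rough illustration of what mapping sensor data to a performance parameter can look like, the sketch below linearly rescales a reading into a parameter range and clamps it at the ends. It is a minimal sketch only: the function name `scale`, the decibel range and the filter cutoff range are all hypothetical, and the project's actual mappings are not described here.

```python
def scale(value, in_lo, in_hi, out_lo, out_hi):
    """Linearly map value from [in_lo, in_hi] to [out_lo, out_hi], clamping at the ends."""
    if in_hi == in_lo:
        raise ValueError("input range must not be empty")
    t = (value - in_lo) / (in_hi - in_lo)  # normalise to 0..1
    t = max(0.0, min(1.0, t))              # clamp out-of-range readings
    return out_lo + t * (out_hi - out_lo)


# Hypothetical example: a noise level in dB drives a filter cutoff in Hz.
cutoff_hz = scale(65.0, 40.0, 90.0, 200.0, 5000.0)
print(cutoff_hz)
```

Clamping keeps an unusually high or low sensor reading from pushing a synthesis parameter outside its usable range.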

Iteration 1: Evolving Feedback Loops, Cellular Automata, and Direct Mapping

The first iteration of the project explored the concepts of the feedback loop and cellular automata. It examined how both can be applied in a generative manner, and mapped noise data from a sensor network to drive a generative music system that implemented these ideas. The resulting performance combined human-in-the-loop guitar-based improvisation with data-driven sound manipulation in the context of a live real-time performance.
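To give a sense of how a cellular automaton can be driven by noise data, here is a minimal sketch under stated assumptions. It uses a one-dimensional elementary automaton (rule 90 is an arbitrary choice), seeds it by thresholding made-up noise readings, and reads the active cells as notes of a C major scale. None of these specifics come from the system used in the performance; they are illustrative only.

```python
def step(cells, rule=90):
    """Advance a 1-D binary cellular automaton by one generation (wrap-around edges)."""
    n = len(cells)
    out = []
    for i in range(n):
        left, centre, right = cells[(i - 1) % n], cells[i], cells[(i + 1) % n]
        index = (left << 2) | (centre << 1) | right  # neighbourhood as a 3-bit number
        out.append((rule >> index) & 1)              # look up the new state in the rule
    return out


def seed_from_noise(levels_db, threshold_db):
    """Hypothetical seeding: a cell is alive where the noise reading exceeds the threshold."""
    return [1 if level > threshold_db else 0 for level in levels_db]


# Made-up noise readings in dB, one per sensor.
noise_db = [52.0, 61.5, 48.0, 70.2, 55.1, 66.3, 49.9, 58.4]
c_major = [60, 62, 64, 65, 67, 69, 71, 72]  # MIDI note numbers

cells = seed_from_noise(noise_db, 60.0)
for _ in range(4):
    notes = [c_major[i] for i, alive in enumerate(cells) if alive]
    print(cells, notes)
    cells = step(cells)
```

Reseeding the automaton from fresh sensor readings at intervals is one simple way to keep the data in the loop while the rule itself generates the moment-to-moment variation.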

Iteration 2: Generative Systems, Musical Interactions, and Richer Data

Iteration two introduced additional data sources (e.g. air pollution and water level measurements) to create more complex musical outputs. The cellular automata were replaced with ‘decision loop’ structures that monitor the inputs of the performer and the city respectively and decide how to respond. Musical information was input via Lemur over OSC and synthesised with a wavetable algorithm, creating a richer and more intricate musical landscape.

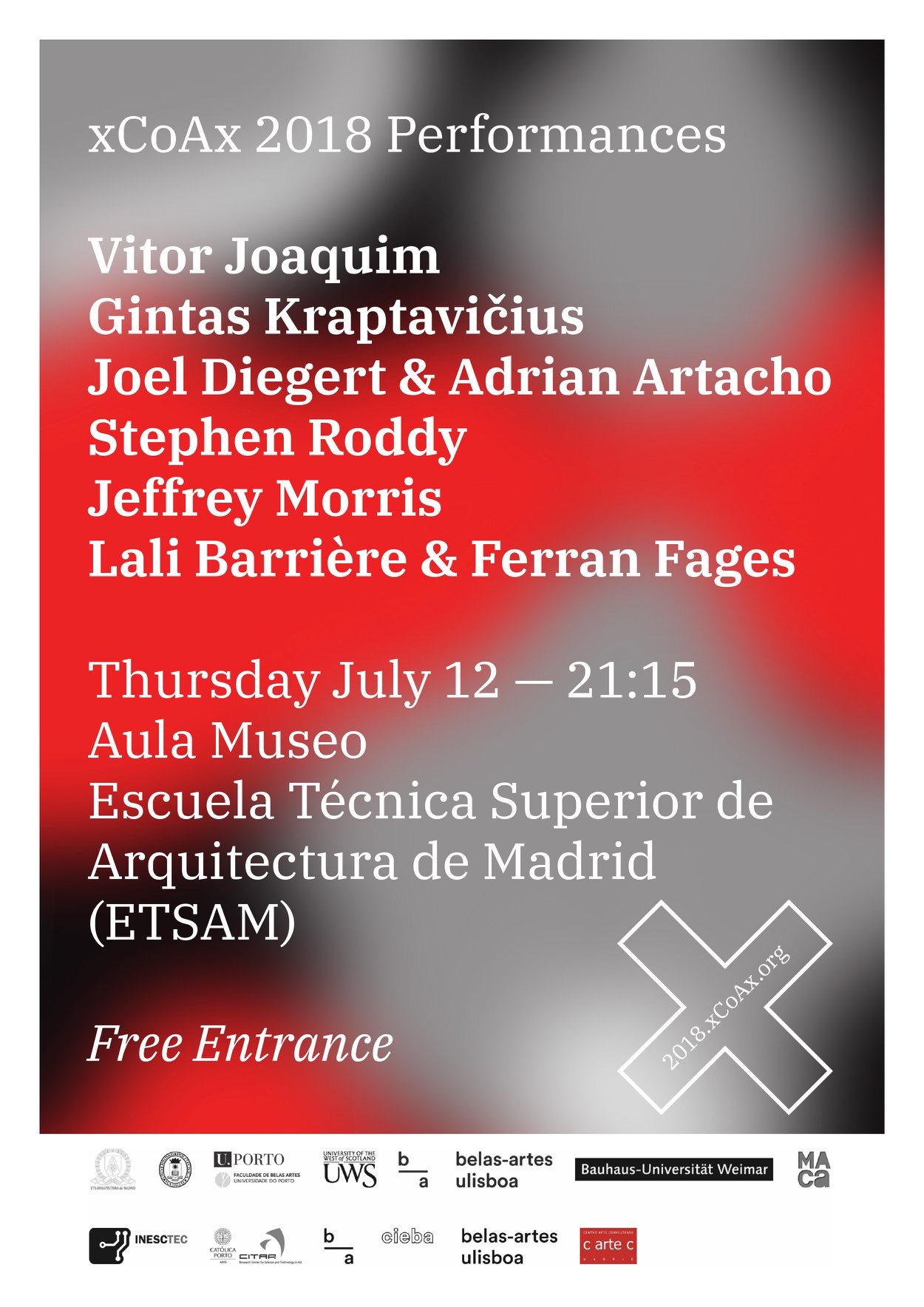

xCoAx paper describing an earlier iteration of the system:

Iteration 3: Toward Emergence through Eurythmia

The third iteration integrated further smart city data sources (e.g. pedestrian and vehicular traffic flow, weather data, and emergency warnings). This iteration ceded much more control to the city, which defined an increasingly large proportion of each performance. The performer still inputs musical information as a starting point for the system, but the system always evolves and iterates over these inputs.

Iteration 4: COVID-19 Crisis Response: Audiovisual Installation

The fourth iteration of the system represented an almost complete surrender of control from the performer. Commissioned during COVID-19, this installation was completely online with no live element and utilized machine learning techniques in the generative music system. Data was also visualized, with noise values expressed as changes in the parameters of a dot-matrix map of Dublin City.

The piece was performed at the 2021 New York Electroacoustic Music Festival. You can find the video and concert program below:

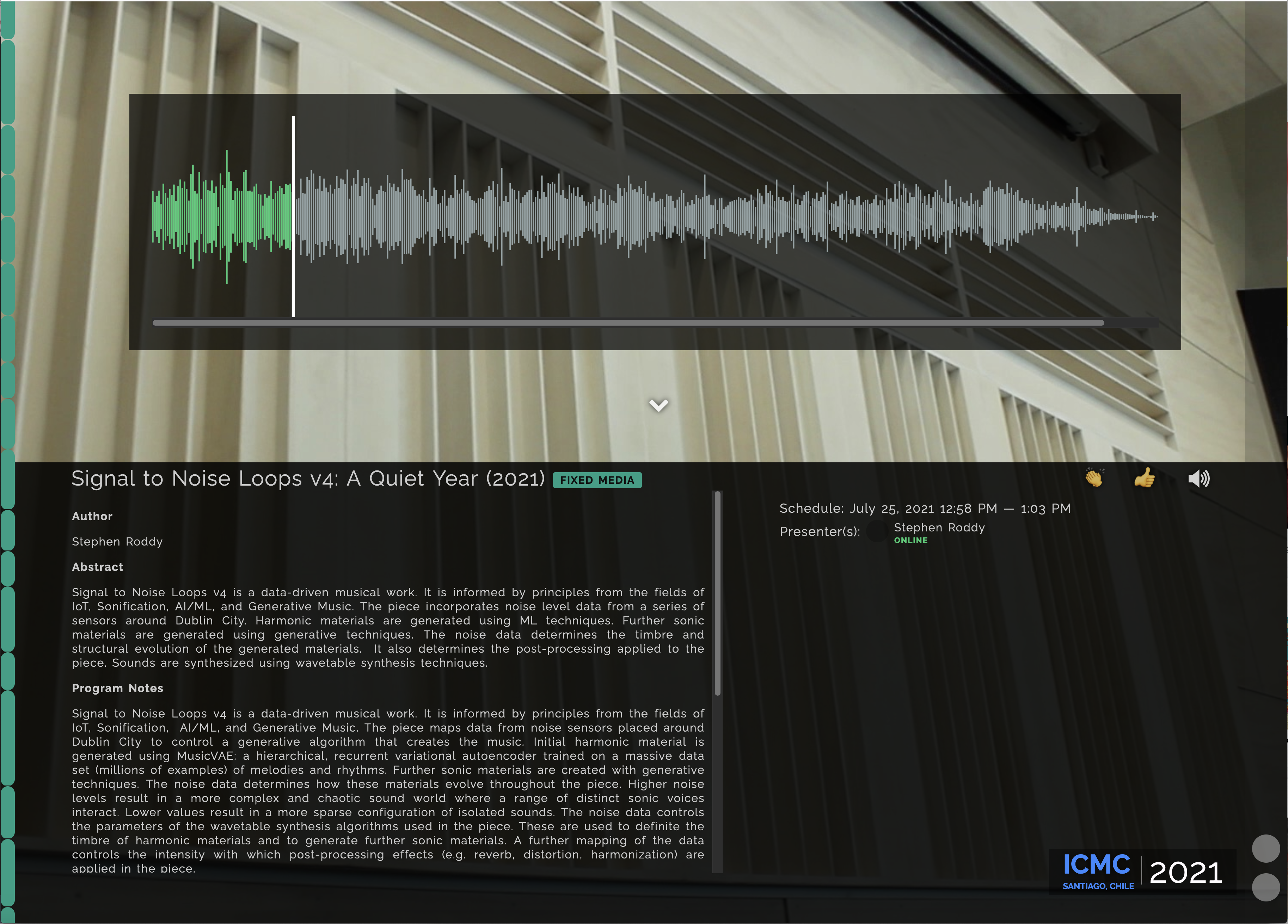

The sonic component of Signal to Noise Loops V4 was installed in the listening room at the International Computer Music Conference in Santiago, Chile, in July 2021.

International Computer Music Conference 2021

Signal to Noise Loops V4 was performed at the 2021 Audio Mostly Conference at the University of Trento, Italy.

The sixteenth edition of Culture Night / Oíche Chultúir took place on Friday 17 September 2021. Signal to Noise Loops V4 was presented as an online installation for Oíche Chultúir Bhaile Átha Cliath 2021 (Dublin City Culture Night).

Signal to Noise Loops V4- Dublin City Culture Night

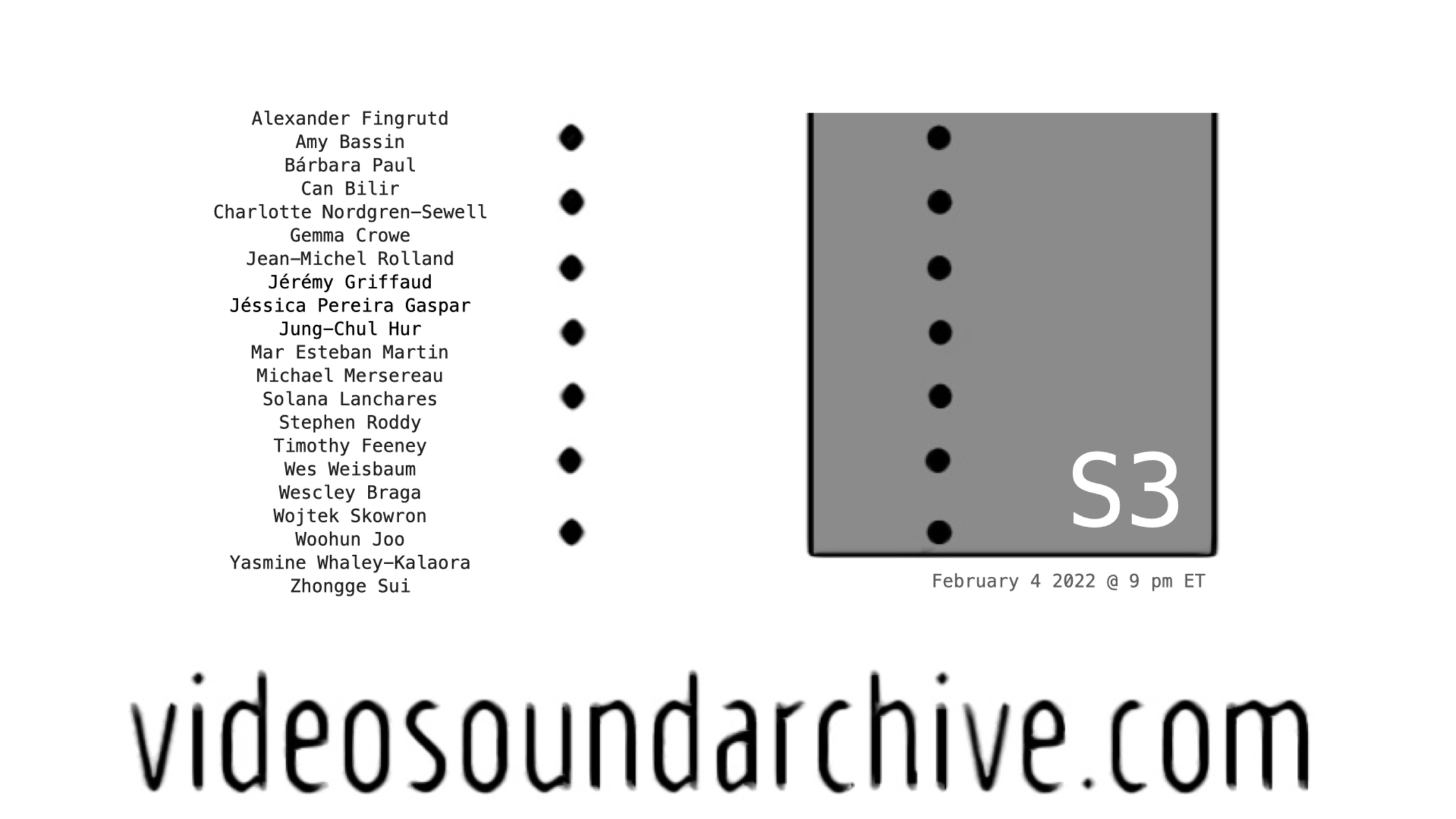

Signal to Noise Loops v4 was also featured in Season 3 of the Video Sound Archive. The Video Sound Archive is an online working archive, dedicated to video and sound that emerged during the pandemic. It provides an alternative to festival and exhibition spaces that accounts for the realities of experiencing art in a time of COVID.

Signal to Noise Loops v4- Video Sound Archive S3 - February 2022

Signal to Noise Loops v4 was selected for performance at the concerts for the 27th International Conference on Auditory Display (ICAD 2022), where it won the award for the Best use of sound in a Concert Piece or Demonstration. You can see the conference paper describing some of the technical development of this specific iteration here:

Leonardo Article

For a more in-depth discussion of the first four iterations of this project please see my article in Volume 56 Issue 1 of Leonardo where they are analyzed and contextualized in detail.

Iteration 5 & 5.1:

Iteration five continued this surrender of authorship. It was commissioned for the 2022 Earth Rising festival taking place at the Irish Museum of Modern Art in the wake of the COVID lockdowns and was designed for mobile and smart devices. It juxtaposed data from during and after the pandemic using an update of the generative music system designed for iteration four, and a new visualisation system. It was followed by iteration 5.1 in 2023 which was installed at the Ubiquitous Music Symposium in Derry and explored the dissolution of the hybrid digital-physical computing practices that had defined life during the pandemic.

Technical Advancement: Controlling the Proportion of Information Present in a Sonification Signal.

From time to time in the development of a complex creative and technical project, problems will emerge that require the design of a bespoke solution which may hold some novelty in and of itself. In the case of this project, one such problem was the need to control the proportion of information present in a sonified signal at any given time so that the data could be ‘turned up or down’ in the same manner that the volume might be.

This is a tricky problem as the information in a given sonification is a function of the mapping of the data to acoustic parameters and the ensuing perception and interpretation of those acoustic pressure waves as sounds by the listener.

However, I found that implementing a pre-sonification smoothing filter on the data in a time series sonification smoothes out rapidly varying components of the data, essentially reducing the proportion of information reaching the listener’s ears. I formalized this solution and created a technical implementation in Max/MSP that can be integrated into sonification production/performance workflows in Max and Ableton Live.

A key advantage of this approach to controlling the proportion of information in a sonification is that turning up the data allows you to zoom in on a data set and hear small, detailed changes, while turning down the data allows you to zoom out and get a sense of evolving trends at higher levels.
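The general idea of a pre-sonification smoothing filter with a single 'data amount' control can be sketched as follows. This is not the Max/MSP implementation described in the paper: the choice of a one-pole low-pass filter (exponential moving average) and the names `smooth` and `data_amount` are assumptions made for illustration.

```python
def smooth(series, data_amount):
    """One-pole low-pass filter (exponential moving average) applied before sonification.

    data_amount is in (0, 1]. At 1.0 the series passes through unchanged
    ('data turned up'); smaller values suppress rapid variation so that only
    slower trends remain ('data turned down').
    """
    if not 0.0 < data_amount <= 1.0:
        raise ValueError("data_amount must be in (0, 1]")
    out = []
    prev = None
    for x in series:
        # The first sample initialises the filter; later samples move part-way to x.
        prev = x if prev is None else prev + data_amount * (x - prev)
        out.append(prev)
    return out


# Made-up readings with rapid fluctuation.
readings = [0.0, 10.0, 0.0, 10.0]
print(smooth(readings, 1.0))   # full detail
print(smooth(readings, 0.25))  # fluctuations heavily reduced
```

The smoothed series would then be passed to whatever mapping drives the sound, so a single control changes how much of the data's fine detail reaches the listener.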

You can read more in the paper below, which was presented at ICAD 2022.

Performances:

- Earth Rising Eco Festival - IMMA 2022

- The 27th International Conference on Auditory Display Concert

- Signal to Noise Loops v4- Video Sound Archive S3 - February 2022

- Signal to Noise Loops V4- Dublin City Culture Night

- 2021 Audio Mostly Conference

- International Computer Music Conference 2021

- 2021 New York Electroacoustic Music Festival

- Signal to Noise Loops 3++ @ ISSTA 2018, Derry, September 2018

- Signal to Noise Loops i2+: Noise Water Dirt @ CSMC 2018, Dublin, August 2018

- Signal to Noise Loops i++ Live @ xCoAx 2018, Madrid

- Noise Loops for Laptop, Improvised Electric Guitar and Dublin City Noise Level Data @ Sonic Dreams 2017, Sonic Arts Waterford, September 30th 2017

Signal to Noise Loops Album

Bacteriophage in Granular Waves

Bacteriophage in Granular Waves is a musical work and performance that adopts data-driven composition methods, generative systems and sonification techniques to produce music from a synthetic virology dataset. The piece debuted at AM.ICAD 2025 in Coimbra, Portugal. It is an early result from an ongoing interdisciplinary research collaboration with Professor Liam Fanning and colleagues across UCC’s College of Medicine and Health, which explores creative and artistic strategies for the visualization and sonification of virology data and aims to develop novel tools for representing complex virological data with creative technologies.

Tags

Generative Music. Signal to Noise Loops. Cybernetics. Rhythmanalysis. Emergence. IoT Data. Smart Cities. Machine Learning. Cellular Automata. Evolutionary Algorithms.
